Jules S. Damji on LinkedIn: Introducing RLlib Multi-GPU Stack for Cost Efficient, Scalable, Multi-GPU…

DeepSpeed: Accelerating large-scale model inference and training via system optimizations and compression - Microsoft Research

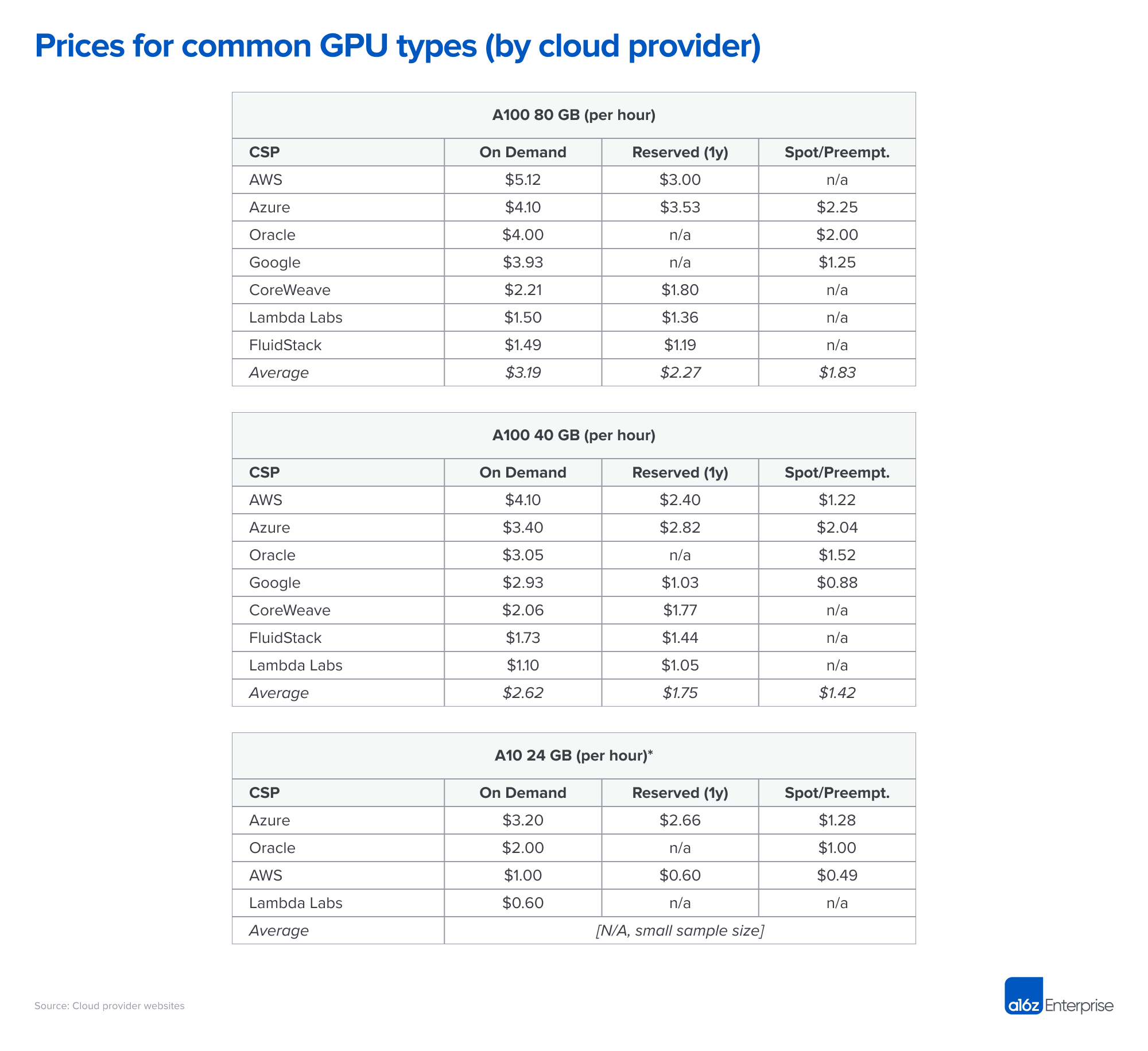

Which VM instance can I start to run the Llama 70B-parameter model? Which would be cost-efficient? What RAM? GPU or CPU? How many CPUs? : r/LocalLLaMA